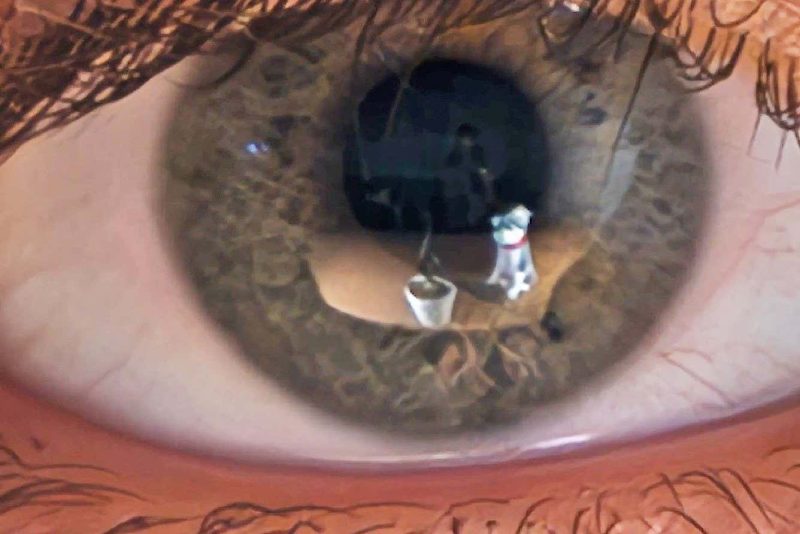

Researchers at the University of Maryland, College Park, have developed a computer vision model that can reconstruct a 3D scene by analyzing the reflections of that scene in a person’s eyes.

The Method

The model uses a technique called neural radiance fields (NeRF), in which a neural network learns the density and color of points in a scene. NeRF is normally trained on images of the scene itself. Here, the model instead works from the scene’s reflections in a person’s eyes. The researchers take between five and 15 photographs of a person’s face from different angles while the person looks at the scene. The reflections occupy only a small area of each eye, but the model can still produce “reasonable” reconstructions of real-life objects.
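To show what “density and color” means in practice, here is a minimal sketch of the standard NeRF volume-rendering step. A pixel’s color is a weighted sum of the colors sampled along its camera ray, with each weight set by the density at that sample and by how much light survives the samples in front of it. A trained network is assumed to be unavailable, so the hypothetical function `toy_field` stands in for it: it describes a red sphere and ignores viewing direction, which a real NeRF also takes as input. The names `toy_field` and `render_ray` and the sampling parameters are illustrative assumptions, not the researchers’ code.

```python
import numpy as np

def toy_field(points):
    """Hypothetical stand-in for a trained NeRF network.

    Models a red sphere of radius 1 centred at the origin and returns
    (density, rgb) for every 3D point in `points` (shape (N, 3)).
    """
    dist = np.linalg.norm(points, axis=-1)
    density = np.where(dist < 1.0, 10.0, 0.0)              # dense inside, empty outside
    rgb = np.broadcast_to([1.0, 0.0, 0.0], points.shape)   # constant red colour
    return density, rgb

def render_ray(origin, direction, field, near=0.0, far=6.0, n_samples=128):
    """Volume-render one ray using the NeRF-style quadrature.

    Returns (rgb, accumulated_opacity) for the ray.
    """
    t = np.linspace(near, far, n_samples)                  # sample depths along the ray
    points = origin + t[:, None] * direction               # (n_samples, 3) sample positions
    sigma, rgb = field(points)
    delta = np.append(np.diff(t), 1e10)                    # spacing between samples
    alpha = 1.0 - np.exp(-sigma * delta)                   # opacity of each segment
    # Transmittance: fraction of light that reaches sample i unoccluded.
    trans = np.cumprod(np.concatenate([[1.0], 1.0 - alpha[:-1]]))
    weights = trans * alpha
    color = (weights[:, None] * rgb).sum(axis=0)
    return color, weights.sum()

if __name__ == "__main__":
    hit = render_ray(np.array([0.0, 0.0, -3.0]), np.array([0.0, 0.0, 1.0]), toy_field)
    miss = render_ray(np.array([0.0, 2.0, -3.0]), np.array([0.0, 0.0, 1.0]), toy_field)
    print(hit, miss)
```

A ray through the sphere’s centre comes back almost pure red with opacity near 1, while a ray that passes beside it returns black with zero opacity. Training a NeRF amounts to adjusting the network until rays rendered this way match the pixels in the photographs.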

The Challenges

The reconstructions are often blurry, largely because it is difficult to estimate the shape of the cornea, the clear outer layer at the front of the eye, accurately. Applied to the eyes of Miley Cyrus and Lady Gaga in music videos, the technique identified the rough shapes of objects but struggled to reconstruct details.

Building on Previous Research

The idea of treating the cornea as an approximate curved mirror to create panoramic images was first explored by researchers at Columbia University in the mid-2000s. The new work extends that idea from panoramic images to 3D reconstruction. The results have been described as impressive. They have also raised concerns about how much detail can be recovered from high-resolution photographs.
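The “curved mirror” idea can be sketched geometrically. A camera ray hits the cornea, and the mirror law r = d − 2(d·n)n gives the direction the reflected light came from in the scene. This sketch assumes the cornea is a perfect sphere with a known centre and radius. Real corneas are not perfectly spherical, and as noted above, estimating their shape is a main source of error. The function name `reflect_off_sphere` and all numeric values are hypothetical and for illustration only.

```python
import numpy as np

def reflect_off_sphere(origin, direction, center, radius):
    """Reflect a camera ray off a sphere used as a stand-in for the cornea.

    Returns (hit_point, reflected_direction), or None if the ray misses.
    """
    d = direction / np.linalg.norm(direction)     # unit ray direction
    oc = origin - center
    # Solve |oc + t*d|^2 = radius^2, i.e. t^2 + 2*b*t + c = 0.
    b = np.dot(oc, d)
    c = np.dot(oc, oc) - radius ** 2
    disc = b * b - c
    if disc < 0:
        return None                               # ray misses the sphere
    t = -b - np.sqrt(disc)                        # nearest intersection
    if t <= 0:
        return None                               # sphere is behind the camera
    hit = origin + t * d
    n = (hit - center) / radius                   # outward unit surface normal
    r = d - 2.0 * np.dot(d, n) * n                # mirror reflection
    return hit, r

if __name__ == "__main__":
    cam = np.array([0.0, 0.0, 0.0])
    eye_center = np.array([0.0, 0.0, 5.0])
    print(reflect_off_sphere(cam, np.array([0.0, 0.1, 1.0]), eye_center, 1.0))
```

Because the reflecting surface is convex, rays leaving the camera in a narrow cone spread out over a wide range of scene directions. This is why a small patch of the eye can reflect a large part of the surroundings, and also why small errors in the assumed shape distort the result.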

Overall, the research shows that reflections in a person’s eyes can be used to build rough 3D models of their surroundings. This points to possible new applications in computer vision.